Packet Requirements

When we converge voice, video and data on the network, it is important for us to understand the characteristics of this traffic. Take voice, for example. It’s not very tolerant of packet loss: the standard says no greater than 1% packet loss. We also don’t want unusual delay. One-way delay shouldn’t exceed 150 milliseconds, which comes from the G.114 standard that you can read up on. That is the goal we really want to shoot for. If it creeps up a little bit, maybe even to 200-210 milliseconds, we might be okay, but above that you’d really start to notice it. Video follows the same standard: 150 milliseconds of one-way delay and no greater than 1% packet loss. Data traffic, on the other hand, really depends on the application: what type of data is being transmitted, and is it using TCP or UDP? So it’s hard to put your finger on the packet requirements for data, whereas for voice and video we know what the requirements are. Later on we use these requirements to put quality of service tools in place so that our packets get delivered, typically with voice and video given priority over the data going across the network, which is the recommended practice for quality of service.

Advantages and Drawbacks

When we think about quality of service, the whole reason we have to put it in place is that we don’t have unlimited bandwidth. Bandwidth costs money, and we’re trying to economize and make do with what we have. So the lack of bandwidth is what causes all of the issues. Now, if we’re congested 24/7 there is not a lot you can do, and putting quality of service in place is not really going to help things out. You have to have at least enough bandwidth under normal conditions. Then, during periodic times of congestion, queuing mechanisms can step in to make sure that voice and video get out first and the data is serviced afterwards. Lack of bandwidth is what causes end-to-end delay; jitter, which is the variation in delay from packet to packet (for example because packets might take a couple of different paths to reach the destination); and ultimately packet loss. Packet loss is the worst. As we mentioned above, voice and video can’t tolerate greater than 1% packet loss. Once we start dropping more than that, things start to sound tinny and choppy, and in some cases the audio or video stream might even become unintelligible or unrecognizable.
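
To make jitter and loss a little more concrete, here is a minimal Python sketch. It assumes one simple way of measuring jitter, the average change in one-way delay between consecutive packets; real tools may calculate it differently. The function names and the sample delay values are made up for illustration.

```python
def mean_jitter_ms(one_way_delays_ms):
    """Average absolute change in delay between consecutive packets."""
    if len(one_way_delays_ms) < 2:
        return 0.0
    diffs = [abs(later - earlier)
             for earlier, later in zip(one_way_delays_ms, one_way_delays_ms[1:])]
    return sum(diffs) / len(diffs)


def loss_percent(sent, received):
    """Share of sent packets that never arrived, as a percentage."""
    if sent == 0:
        return 0.0
    return 100.0 * (sent - received) / sent


# Hypothetical one-way delays (in milliseconds) for four voice packets.
delays = [100, 120, 95, 130]
print(round(mean_jitter_ms(delays), 1))  # 26.7
print(loss_percent(1000, 985))           # 1.5, already above the 1% limit
```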

QoS Recommendations

In short, we don’t want our latency to go above 150 milliseconds, jitter should be no greater than 30 milliseconds, and loss can’t be greater than 1% or it starts to become noticeable. The bandwidth requirements for your voice and video are determined by the codec you choose and any additional overhead needed to transmit that traffic. With video streams, let’s say the stream requires 384 kilobits per second. We add 20% to that, which gives about 461 kilobits per second, and that would be enough bandwidth for one video stream. So whatever the baseline is, add 20% and that will give you enough wiggle room for that video traffic to go across.
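
As a rough sketch, the targets and the 20% rule can be written down in a few lines of Python. The function and constant names are our own and purely illustrative; only the numbers come from the recommendations above.

```python
MAX_LATENCY_MS = 150      # one-way delay target
MAX_JITTER_MS = 30        # jitter target
MAX_LOSS_PERCENT = 1.0    # packet loss target
VIDEO_HEADROOM_PERCENT = 20


def meets_voice_video_targets(latency_ms, jitter_ms, loss_percent):
    """True when a path is within all three recommended limits."""
    return (latency_ms <= MAX_LATENCY_MS
            and jitter_ms <= MAX_JITTER_MS
            and loss_percent <= MAX_LOSS_PERCENT)


def video_bandwidth_kbps(stream_kbps, headroom_percent=VIDEO_HEADROOM_PERCENT):
    """Baseline video rate plus the recommended headroom."""
    return stream_kbps * (100 + headroom_percent) / 100


print(meets_voice_video_targets(120, 20, 0.5))  # True
print(meets_voice_video_targets(210, 20, 0.5))  # False, latency is too high
print(video_bandwidth_kbps(384))                # 460.8
```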

Link Fragmentation and Interleaving

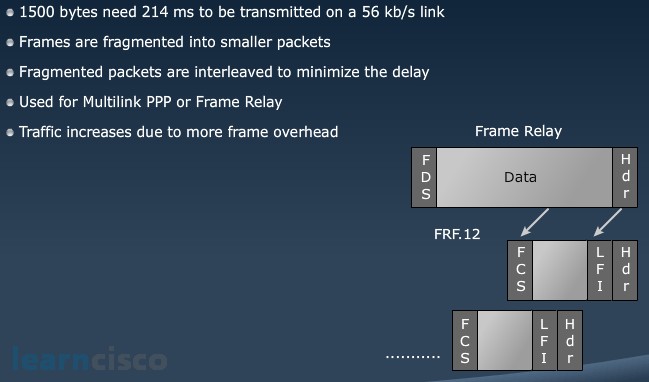

Link fragmentation and interleaving is a way that we can make more efficient use of a link. We’re not doing any kind of compression and we’re not doing any kind of queuing. What we’re doing is taking a data packet that could be very large, chopping it up, and interleaving the tiny voice packets between the fragments.

In the given example, a 1500-byte packet going out an interface needs 214 milliseconds to be transmitted over a 56 kbps link. Our G.114 standard says no greater than 150 milliseconds for a voice packet. If I’m stuck behind that data packet I’m already in trouble: I’m already at 214 milliseconds and my voice packet hasn’t even left the interface yet. That’s why we would turn on Multilink PPP, or a Frame Relay fragmentation mechanism if you’re using Frame Relay: it allows us to set up the link fragmentation and then interleave the voice traffic in between the fragments of that packet.

Now if you think about this, it does cause an issue. I took this 1500-byte data packet that had one header and one footer and chopped it up into several packets, each of which now carries its own header and footer information, so I’ve actually increased the amount of traffic. But on slower-speed links, by chopping that packet up and interleaving the voice, I’m fixing my latency issue. Latency is detrimental to voice traffic, so it is better to have more traffic and get out the interface quickly than to have less traffic and not make it out that interface in a timely fashion.
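
The arithmetic behind that example can be sketched in Python. The 214 millisecond figure comes straight from the packet size and link speed. The 10 millisecond per-fragment target and the 6 bytes of overhead per fragment are assumptions we picked for illustration, not values from this lesson, and the function names are hypothetical.

```python
import math


def serialization_delay_ms(packet_bytes, link_bps):
    """Time needed to clock a packet onto the wire, in milliseconds."""
    return packet_bytes * 8 * 1000 / link_bps


def fragment_size_bytes(link_bps, target_delay_ms):
    """Largest fragment that serializes within the target delay."""
    return link_bps * target_delay_ms // 8000


def fragmented_total_bytes(packet_bytes, fragment_bytes, overhead_per_fragment):
    """Bytes sent once every fragment carries its own extra header bytes."""
    fragments = math.ceil(packet_bytes / fragment_bytes)
    return packet_bytes + fragments * overhead_per_fragment


print(round(serialization_delay_ms(1500, 56_000)))  # 214

# Assumed target: no fragment should hold the link for more than 10 ms.
size = fragment_size_bytes(56_000, 10)
print(size)                                         # 70

# Assumed overhead: 6 extra bytes per fragment (hypothetical value).
print(fragmented_total_bytes(1500, size, 6))        # 1632
```

More bytes cross the link in total, but a voice packet never waits behind more than one small fragment.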

Compression Methods

To make even more efficient use of the link we can do compression: header compression for the RTP packet and payload compression. An RTP packet header is huge. The combined IP, UDP and RTP headers are 40 bytes in size, and we can reduce that down to 2 or 4 bytes, where the 4-byte version also carries a checksum on the packet. So we can reduce that header, and then we can take a G.729 call on a Frame Relay link, for example, and reduce it from 28 kbps down to 14 kbps. Header compression definitely has a good payoff for us.

Payload compression has a payoff as well. If we use something like G.729, for example, we get a good compression ratio for our voice. And if you are wondering whether a compressed voice packet is going to sound bad: it sounds great, by the way. It’s so hard to tell the difference that you probably couldn’t tell on most telephone conversations. So compressing that payload also gives you a great payoff.

Now you may be wondering about the cost: compression takes CPU cycles and may add to your overall delay. Newer devices are able to do it more efficiently, and they have hardware-assisted compression, which reduces the CPU load and the Layer 2 payload compression delay. If you’re using a really, really old piece of gear that can’t do hardware compression and has to use software compression, then I might look at it again, and maybe I don’t do any type of compression because it would add too much delay. Otherwise, on newer gear, hardware compression is the choice I would make.
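
To see roughly where the header compression savings come from, here is a small Python sketch. It assumes G.729 sends a 20-byte payload 50 times per second, which is a common setup but not something stated in this lesson. It also leaves Layer 2 overhead at zero by default, so the results will not match the Frame Relay figures above exactly. The function name is hypothetical.

```python
def voice_bandwidth_kbps(payload_bytes, header_bytes, packets_per_second,
                         layer2_bytes=0):
    """Per-call bandwidth for a given payload, header and packet rate."""
    frame_bytes = payload_bytes + header_bytes + layer2_bytes
    return frame_bytes * 8 * packets_per_second / 1000


# Assumed G.729 settings: 20-byte payload, 50 packets per second.
uncompressed = voice_bandwidth_kbps(20, 40, 50)  # full 40-byte headers
compressed = voice_bandwidth_kbps(20, 2, 50)     # headers compressed to 2 bytes

print(uncompressed)  # 24.0
print(compressed)    # 8.8
```

Pass a `layer2_bytes` value for your own link type to see how the Layer 2 framing changes the totals.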
