Throughout the evolution of local area networks, we have seen network efficiency and bandwidth utilization become critical features of any local area network. PCs and servers can now execute thousands of MIPS (millions of instructions per second), which gives them more horsepower to generate more data and send more information to the network.

Network Congestion: Ethernet Hub vs. Bridge

In general terms, the sheer volume of information transported across local and wide area networks is increasing exponentially. Bandwidth-hungry applications such as video, multimedia, e-learning, and collaboration impose stringent requirements on our networks.

We know that hubs no longer solve the network efficiency problem, because each hub is a single collision domain and a single broadcast domain. They lack the intelligence to handle the large volumes of traffic and the traffic patterns found in today's networks. Bridges are an improvement. They operate at layer 2 of the OSI model and have the intelligence to filter or forward frames based on what they have learned about source and destination MAC addresses. Although they have fewer ports and are slower than switches, they improve on hubs because, just like switches, they create multiple collision domains (one per port).

LAN Switch

Although the basic functionality of a switch is similar to that of a bridge, switches work at much higher speeds and offer more functionality. They have higher port densities: typical access layer LAN switches have 28 to 48 ports, while distribution layer switches and high-density access switches can support hundreds of ports, which makes switches very scalable. They can also buffer more data, so they are less likely to drop frames while those frames are being processed. They typically support a mixture of port speeds, from 10 and 100 Mb/s up to 1 Gb/s and 10 Gb/s, with the faster speeds used especially for uplinks.

Switches include a switching fabric which, together with having one collision domain per port, allows multiple conversations to take place at the same time, so an increasingly high number of traffic flows can use the switch concurrently. Switches can also be configured to support different switching modes. Store-and-forward is the more traditional mode: the switch buffers each frame completely, checks it for errors, and determines the outbound port before sending it. Cut-through mode has lower latency: the switch starts forwarding a frame as soon as it has read the destination MAC address, even before it has received the complete frame, which means it can also forward corrupted frames. Fragment-free mode is a variant of cut-through that waits for the first 64 bytes of the frame before forwarding; because collisions occur within the first 64 bytes, this filters out most collision fragments while keeping latency low.
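To make the difference between the three modes concrete, here is a minimal Python sketch of how much of a frame each mode must receive before it can start forwarding, and the resulting delay on the ingress link. It assumes byte counts start at the destination MAC address (preamble ignored), that cut-through waits only for the 6-byte destination address, and that fragment-free waits for the first 64 bytes; lookup and processing time are ignored, and the function names are illustrative.

```python
"""Illustrative comparison of when each switching mode can start forwarding."""

# Assumption: counts start at the destination MAC; preamble/SFD are ignored.
DEST_MAC_BYTES = 6    # cut-through only needs the destination address
MIN_FRAME_BYTES = 64  # fragment-free waits past the 64-byte collision window


def bytes_before_forwarding(mode: str, frame_len: int) -> int:
    """Return how many bytes must arrive before the switch starts forwarding."""
    if mode == "store-and-forward":
        return frame_len  # entire frame buffered so the FCS can be checked
    if mode == "cut-through":
        return min(DEST_MAC_BYTES, frame_len)
    if mode == "fragment-free":
        return min(MIN_FRAME_BYTES, frame_len)
    raise ValueError(f"unknown switching mode: {mode!r}")


def forwarding_delay_us(mode: str, frame_len: int, link_bps: float) -> float:
    """Microseconds spent receiving those bytes (lookup time ignored)."""
    return bytes_before_forwarding(mode, frame_len) * 8 / link_bps * 1e6


if __name__ == "__main__":
    # A maximum-size 1518-byte frame arriving on a 100 Mb/s port.
    for mode in ("store-and-forward", "fragment-free", "cut-through"):
        print(f"{mode:>17}: {forwarding_delay_us(mode, 1518, 100e6):7.2f} us")
```

For that 1518-byte frame on a 100 Mb/s port, the sketch gives 121.44, 5.12, and 0.48 microseconds respectively, which illustrates the latency store-and-forward pays for checking the whole frame.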

Bridges and switches are similar in that they connect LAN segments. Both learn MAC addresses so they can filter traffic and send it only to the port where the destination is located. However, switches have several features that make them more effective at alleviating congestion and making better use of bandwidth. The first is dedicated communication between devices, also known as microsegmentation. It is possible because each port on the switch is its own collision domain, so a device does not compete with other devices for access to its link. With no competition on that link, in theory no collisions occur.

You may think there is still competition, because both ends of the link may try to transmit at the same time. With full-duplex communication, however, both devices can transmit simultaneously, which effectively doubles the capacity of the link. For example, a 100 Mb/s full-duplex link has an effective capacity of 200 Mb/s.
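The effect of microsegmentation and full duplex can be shown with simple arithmetic. The sketch below is idealized: it assumes a hub's bandwidth is split evenly among its devices and ignores collision overhead (which makes a real hub perform worse), and the function names and the ten-PC example are illustrative.

```python
def hub_share_mbps(link_mbps: float, devices: int) -> float:
    """Average bandwidth per device when all devices share one half-duplex
    collision domain (idealized hub: collision overhead ignored)."""
    if devices < 1:
        raise ValueError("need at least one device")
    return link_mbps / devices


def switch_port_capacity_mbps(link_mbps: float, full_duplex: bool = True) -> float:
    """Capacity of one dedicated switch port (its own collision domain).
    Full duplex lets both ends transmit at once, doubling the total."""
    return link_mbps * 2 if full_duplex else link_mbps


if __name__ == "__main__":
    # Hypothetical: ten PCs, each on a 100 Mb/s link.
    print("hub, per device:", hub_share_mbps(100, 10), "Mb/s")
    print("switch port, half duplex:", switch_port_capacity_mbps(100, False), "Mb/s")
    print("switch port, full duplex:", switch_port_capacity_mbps(100), "Mb/s")
```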

Another important feature is that switches allow multiple simultaneous conversations. This is made possible by the switch's switching fabric, the internal architecture and buffers that let multiple pairs of ports exchange frames at the same time. For example, while one pair of ports is exchanging frames, another pair can be doing the same. Some switches allow all ports to transmit frames at full speed at the same time; these are known as wire-speed, non-blocking switches, and that capability makes them relatively more expensive.

Finally, media rate adaptation allows a switch to handle different speeds on different ports and to broker the transmission of frames between ports running at different speeds. This lets a switch be deployed, for example, at the access layer, where multiple machines connect at lower speeds because that is all they need, while they share a high-speed trunk for communication with the rest of the network.
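Whether a switch is non-blocking, and how heavily an access switch's uplinks are shared, comes down to arithmetic on port speeds. A hedged sketch, assuming a switch counts as non-blocking when its fabric capacity covers every port sending and receiving at full speed (full duplex counts both directions); the port counts and fabric figure are hypothetical, and vendors may quote fabric capacity differently.

```python
from typing import Sequence


def required_fabric_mbps(port_speeds_mbps: Sequence[int]) -> int:
    """Capacity needed for every port to send and receive at wire speed at
    the same time (full duplex counts both directions)."""
    return 2 * sum(port_speeds_mbps)


def is_non_blocking(port_speeds_mbps: Sequence[int], fabric_mbps: int) -> bool:
    """True if the fabric can carry all ports at wire speed simultaneously."""
    return fabric_mbps >= required_fabric_mbps(port_speeds_mbps)


def oversubscription(access_mbps: Sequence[int], uplink_mbps: Sequence[int]) -> float:
    """Ratio of total access bandwidth to total uplink bandwidth (2.4 means 2.4:1)."""
    return sum(access_mbps) / sum(uplink_mbps)


if __name__ == "__main__":
    # Hypothetical access switch: 48 x 100 Mb/s ports plus 2 x 1 Gb/s uplinks.
    access = [100] * 48
    uplinks = [1000] * 2
    ports = access + uplinks
    print("fabric needed:", required_fabric_mbps(ports), "Mb/s")
    print("non-blocking with 10 Gb/s fabric?", is_non_blocking(ports, 10_000))
    print("uplink oversubscription: %.1f:1" % oversubscription(access, uplinks))
```

With these hypothetical figures the fabric must handle 13,600 Mb/s to be non-blocking, so a 10 Gb/s fabric would block, and the slower access ports oversubscribe the faster uplinks 2.4:1, which is acceptable only as long as the access ports do not all transmit at full speed at once.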

For all these reasons, switches have superseded bridges and are the network element of choice for local area networks today; hubs and bridges make little sense in modern networks. Switches operate at layer 2 just like bridges, but, as we have mentioned, they have many more ports, and faster ones, and the intelligence to forward, filter, or flood frames. When a switch receives a frame, it looks up the frame's destination MAC address in its MAC address table. If it finds a match, it forwards the frame only out of the port where the destination resides (and if that is the port the frame arrived on, it filters the frame instead). If it does not find a match, it floods the frame out of all ports except the one on which it arrived.

Switching Frames

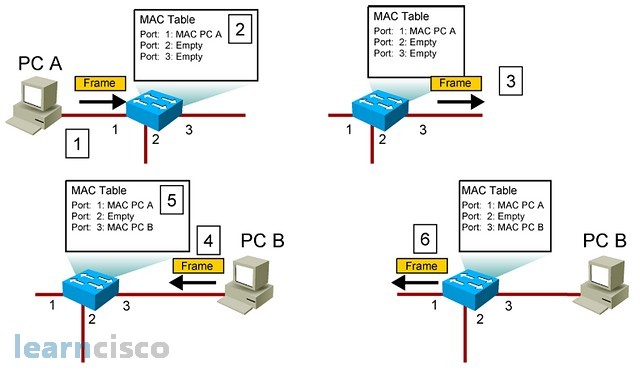

This is a more visual description of the process. In step 1, PC A sends a frame to the switch. The switch compares the destination MAC address with its MAC address table and finds that it is not there. In step 2, before flooding the frame, the switch records that PC A is on that port by adding PC A's MAC address to the table. In step 3, the frame is flooded out of all ports except the one it arrived on. In step 4, the intended destination receives the frame and recognizes its own MAC address as the destination, while the other devices discard it. The destination then replies, and in step 5 the switch learns from the reply and adds the MAC address of PC B, in this case, to the MAC address table. In step 6, the switch no longer needs to flood; it forwards and filters. In other words, it forwards the reply only out of port 1, which is where PC A resides according to the MAC address table.
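The learning, flooding, and forwarding behavior walked through above can be sketched as a small Python class. It is a minimal model, not a real switch implementation: MAC addresses are plain strings and there is no table aging or VLAN support. The four-port switch, PC B's port (3), and the MAC values are hypothetical. It also includes the filter case (dropping a frame whose destination is on the port it arrived on), which the six steps do not reach. Broadcasts need no special handling here because the broadcast address is never learned as a source, so it always floods.

```python
from typing import Dict, Iterable, List


class LearningSwitch:
    """Minimal model of transparent MAC learning (no aging, no VLANs)."""

    def __init__(self, ports: Iterable[int]) -> None:
        self.ports: List[int] = list(ports)
        self.mac_table: Dict[str, int] = {}  # MAC address -> port

    def receive(self, in_port: int, src_mac: str, dst_mac: str) -> List[int]:
        """Learn the source, then return the ports the frame is sent out of."""
        # Learn: remember which port the source MAC was seen on.
        self.mac_table[src_mac] = in_port
        out_port = self.mac_table.get(dst_mac)
        if out_port is None:
            # Unknown destination (or broadcast): flood all ports except ingress.
            return [p for p in self.ports if p != in_port]
        if out_port == in_port:
            # Destination is on the ingress segment: filter (drop) the frame.
            return []
        # Known destination: forward out of that single port.
        return [out_port]


if __name__ == "__main__":
    PC_A, PC_B = "00:00:00:00:00:0a", "00:00:00:00:00:0b"  # hypothetical MACs
    sw = LearningSwitch(ports=[1, 2, 3, 4])
    # Steps 1-3: PC A on port 1 sends to PC B; A is learned, the frame floods.
    print(sw.receive(1, PC_A, PC_B))
    # Steps 4-6: PC B on port 3 replies; B is learned, the reply goes to port 1 only.
    print(sw.receive(3, PC_B, PC_A))
    print(sw.mac_table)
```

Running it prints `[2, 3, 4]` for the first frame, `[1]` for the reply, and a table mapping PC A to port 1 and PC B to port 3.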

We know that all devices connected to a switch belong to the same network segment and can see each other. In today's local area networks, though, connectivity requirements span multiple workgroups, and consolidation and centralization trends require workgroups either to connect to each other or to reach central resources such as server farms and internet access. That is why we build campus networks made up of a hierarchy of switches interconnected by high-speed links. Thousands of users can be connected to a single flat network that forms one broadcast domain.

By default, a switch is a single broadcast domain, and when you interconnect multiple switches, the whole hierarchy forms one broadcast domain. That means a broadcast sent by one user in one workgroup reaches every device in the network, which degrades performance and wastes bandwidth.

VLAN Overview

Enter VLANs, a way to create multiple broadcast domains on the same switch or across a hierarchy of switches. A VLAN (virtual LAN) improves performance by making each VLAN a separate broadcast domain; this is called segmentation. A broadcast generated by a machine in one VLAN reaches only other devices in the same VLAN and does not span other VLANs. Each broadcast domain, or VLAN, is treated as an IP segment or IP subnet, so communicating between VLANs requires layer 3 functionality, in other words, a router. This also makes VLANs a security tool: because traffic must cross a router to get from one VLAN to another, you can build your access control on that router.
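A small Python sketch of both ideas: a broadcast is flooded only to ports in the ingress port's VLAN, and because each VLAN is its own IP subnet, a host can tell whether a destination is reachable directly or only through a router. The port-to-VLAN maps, VLAN IDs, and addresses are hypothetical, and `needs_router` is an illustrative helper built on Python's standard `ipaddress` module.

```python
import ipaddress
from typing import Dict, List


def broadcast_flood_ports(port_vlan: Dict[int, int], in_port: int) -> List[int]:
    """Ports a broadcast reaches: every other port in the ingress port's VLAN."""
    vlan = port_vlan[in_port]
    return sorted(p for p, v in port_vlan.items() if v == vlan and p != in_port)


def needs_router(src_interface: str, dst_ip: str) -> bool:
    """True if dst_ip is outside the source's subnet (i.e. in another VLAN),
    so the traffic must go through a layer 3 device."""
    network = ipaddress.ip_interface(src_interface).network
    return ipaddress.ip_address(dst_ip) not in network


if __name__ == "__main__":
    flat = {port: 1 for port in range(1, 9)}  # one VLAN: one broadcast domain
    split = {**{p: 10 for p in range(1, 5)}, **{p: 20 for p in range(5, 9)}}
    print("flat network:", broadcast_flood_ports(flat, 1))
    print("with VLANs:  ", broadcast_flood_ports(split, 1))
    # Hypothetical addressing: VLAN 10 = 10.1.10.0/24, VLAN 20 = 10.1.20.0/24.
    print("same VLAN needs router?", needs_router("10.1.10.5/24", "10.1.10.9"))
    print("other VLAN needs router?", needs_router("10.1.10.5/24", "10.1.20.7"))
```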

VLANs are implemented by assigning switch ports to specific VLANs. This decouples a device's physical location from the virtual LAN it belongs to. For example, a device on the third floor and a device on the first floor can both belong to the same blue VLAN. They are connected to different switches, but the whole hierarchy of switches knows the VLAN definition. This creates a more flexible environment: not only does physical location no longer matter, but moves, adds, and changes are also easier to implement. If I want to move a device to a different workgroup, all I need to do is change the VLAN assigned to the switch port where the device is connected.
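Because VLAN membership is a property of the switch port rather than of the device's location, a move, add, or change amounts to updating one port's assignment. A minimal Python model, with hypothetical switch names, port numbers, and VLAN IDs (VLAN 10 stands in for the blue VLAN); on real equipment this change is made in the switch configuration, not in Python.

```python
from typing import Dict, List, Tuple

PortKey = Tuple[str, int]  # (switch name, port number)


def vlan_members(port_vlan: Dict[PortKey, int], vlan: int) -> List[PortKey]:
    """All switch ports, on any switch in the hierarchy, assigned to a VLAN."""
    return sorted(key for key, v in port_vlan.items() if v == vlan)


def change_port_vlan(port_vlan: Dict[PortKey, int], switch: str, port: int,
                     new_vlan: int) -> None:
    """A move/add/change: only the VLAN of the device's access port changes."""
    key = (switch, port)
    if key not in port_vlan:
        raise KeyError(f"unknown port {key}")
    port_vlan[key] = new_vlan


if __name__ == "__main__":
    BLUE, RED = 10, 20  # hypothetical VLAN IDs
    port_vlan: Dict[PortKey, int] = {
        ("floor3-sw", 5): BLUE,   # device on the third floor
        ("floor1-sw", 12): BLUE,  # device on the first floor, same VLAN
        ("floor1-sw", 13): RED,
    }
    print("blue VLAN:", vlan_members(port_vlan, BLUE))
    # The user on floor1-sw port 13 joins the blue work group.
    change_port_vlan(port_vlan, "floor1-sw", 13, BLUE)
    print("blue VLAN:", vlan_members(port_vlan, BLUE))
```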
